One of the most pertinent questions in risk management has been: How much do you stand to lose, over a certain period and with a certain probability? The most common answer is Value at Risk (VaR), a risk measure that expresses itself as one number. What is that number and what does it stand for?

Assumptions

In order to interpret this number we first have to assume that:

- Everything assumed in the (VaR) calculations/process is true

- All approximations made are accurate

- The future follows the past and whatever risk you are analyzing only exists for the specified (certain) period

If you are reasonably comfortable with these assumptions, then the one number that answers your question is Value at Risk, or VaR for short. VaR is a worst-case loss qualified by a time period and a probability.

Issues with VaR

Value at Risk (VaR) has been around for quite some time now. However, the effectiveness of the concept has been the subject of persistent debate. Like all tools, the efficacy of VaR is in its use. Some of the key issues raised in current literature about VaR are:

- Is the one number sufficient by itself to completely capture the risk in a position?

- Do the users of VaR understand the limitations of the tool and the implications of those limitations?

- Risk management is concerned with extreme events or large deviations from what is expected. The most common tool we use for measuring these deviations is variance, an average (of sorts) of all the squared deviations from the mean. Although we use it as the key tool for calculating VaR, it is not the most appropriate. Higher-order moments that measure asymmetry (skewness) or the length and thickness of the tails (kurtosis) would be more accurate.

- VaR uses data from all events to evaluate the impact of extreme events. VaR is forced to do this because, by definition, extreme events do not occur frequently enough to generate sufficient data. The downside is that losses in extreme events have much higher means and variances than losses across the full data set. This means that if VaR (somehow) could be estimated from extreme events alone, the result would be a much higher Value at Risk estimate.

- Given that the object of risk management is to understand risk exposures and neutralize them, there is a strong emphasis on supplementing VaR with scenario analysis or sensitivity testing.
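
To make the point about variance concrete, here is a minimal sketch in Python. The two return series are made up for illustration (they are not market data), and the `moments` helper is a hypothetical name that uses plain population formulas. Both series have the same variance, yet only one of them hides a single large loss.

```python
def moments(returns):
    """Return (variance, skewness, excess kurtosis) from population central moments."""
    n = len(returns)
    mean = sum(returns) / n
    m2 = sum((r - mean) ** 2 for r in returns) / n  # variance
    m3 = sum((r - mean) ** 3 for r in returns) / n  # drives skewness
    m4 = sum((r - mean) ** 4 for r in returns) / n  # drives kurtosis
    skewness = m3 / m2 ** 1.5
    excess_kurtosis = m4 / m2 ** 2 - 3.0
    return m2, skewness, excess_kurtosis


# Hypothetical series: ten returns that swing evenly up and down.
symmetric = [0.03, -0.03] * 5
# Hypothetical series: nine small gains followed by one large loss.
one_crash = [0.01] * 9 + [-0.09]

var_sym, skew_sym, kurt_sym = moments(symmetric)
var_crash, skew_crash, kurt_crash = moments(one_crash)

print("variance:       ", var_sym, var_crash)
print("skewness:       ", skew_sym, skew_crash)
print("excess kurtosis:", kurt_sym, kurt_crash)
```

Under these assumptions the variances match, while the second series shows a skewness of roughly -2.7 and an excess kurtosis of roughly 5.1. A measure built on variance alone would treat the two series as equally risky.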

What VaR calculates

Value at Risk (VaR) is a market risk measurement approach that uses historical market trends and volatilities to estimate the likelihood that a given portfolio’s losses will exceed a certain amount.

In one sense it is an extension (or even a simplification, depending on who you ask) of two older models: the probability of shortfall calculations used in the early ’80s by pension fund managers to estimate the probability that they will eat into surplus, and the probability of ruin models used by insurance companies for the last 200 years.

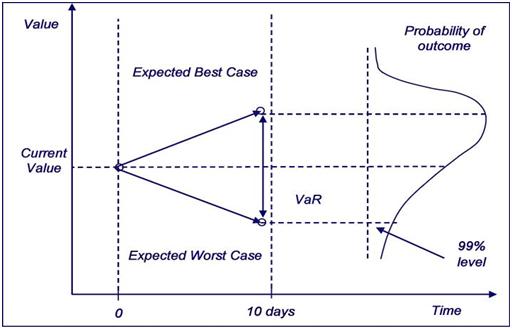

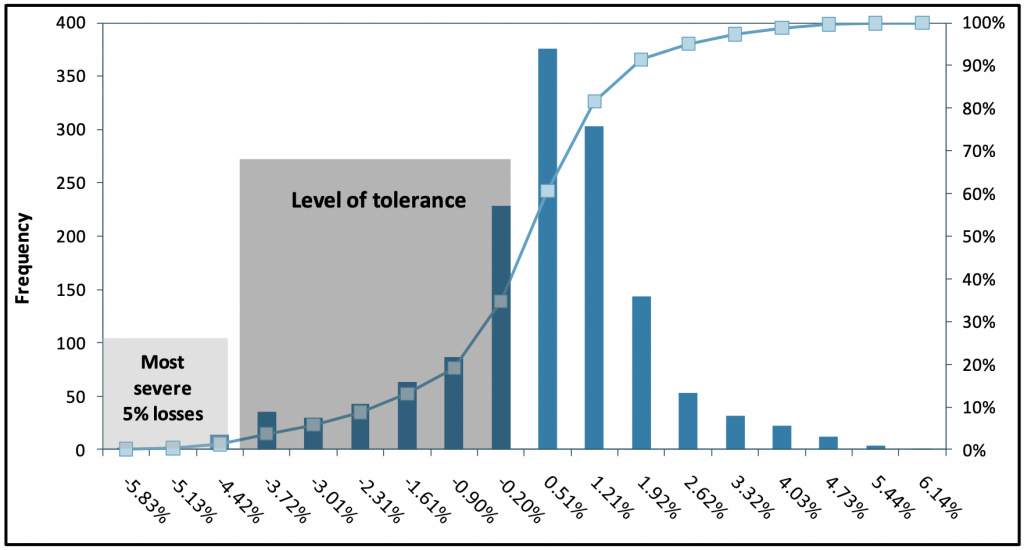

VaR measures the largest loss that a portfolio or position is likely to suffer over a holding period (usually 1 to 10 days) with a given probability (confidence level). As an example, assuming a 99% confidence level and a ten-day holding period, a VaR of 1 million US dollars means that there is only a one percent chance that losses will exceed the 1 million US dollar figure over the next ten days. When the holding period is a single day, it is also “fashionable” to refer to this loss as the one day in 100 days loss.
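
The interpretation above can be sketched in a few lines of Python. This is only an illustration of the definition, not one of the calculation methods covered in the next post: the 100 profit-and-loss figures are invented, and `empirical_var` is a hypothetical helper that simply reads the loss at the chosen confidence level off the sorted data.

```python
def empirical_var(pnl, confidence_pct):
    """Loss that is exceeded in at most (100 - confidence_pct)% of observations.

    pnl: profit-and-loss figures for one holding period each (losses are negative).
    confidence_pct: confidence level as a whole-number percentage, e.g. 99.
    """
    losses = sorted(-x for x in pnl)  # positive numbers are losses, ascending
    n = len(losses)
    # Smallest rank k such that at least confidence_pct% of observations
    # are at or below losses[k]; integer arithmetic avoids rounding surprises.
    k = (confidence_pct * n + 99) // 100 - 1
    return losses[k]


# Hypothetical data: 98 unremarkable ten-day results plus two bad ones (in USD).
pnl = [((i % 7) - 3) * 50_000 for i in range(98)] + [-1_000_000, -1_500_000]

var_99 = empirical_var(pnl, 99)
exceedances = sum(1 for x in pnl if -x > var_99)

print("99% VaR:", var_99)
print("observations with a larger loss:", exceedances, "of", len(pnl))
```

With this made-up sample the 99% VaR comes out at 1,000,000 and exactly one observation in 100 shows a larger loss, which is the "one percent chance" reading described above. Note that the number says nothing about how large that one exceedance is.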

In our next post, we look at some of the methods for calculating VaR.
